Executive summary

How AI Is Rewriting Management and Elevating Adaptive Leaders

By Sam Bell, CEO, Institute of Managers and Leaders (IML)

A stark narrative dominates the business press. High-profile technology companies are flattening hierarchies, carving out middle management, and framing AI as the ultimate replacement for human orchestration. The ‘unbossing’ trend paints a grim picture for the future of work.

The reality across the broader Australian economy is far more complex. AI disruption is not a homogeneous force – it moves at different speeds and takes fundamentally different shapes across sectors, companies, and individual roles. For much of the real economy, AI will not drive workforce elimination. It will drive workforce transformation.

That transformation places a new mandate on HR and L&D leaders – one that extends far beyond traditional hiring and capability planning. HR and L&D must now equip their organisations for a shift that is redefining the very nature of management and the capabilities it demands.

Leaders consistently ask how to build capability for a future that remains unsettled. This paper addresses that challenge by drawing on interviews with Institute of Managers and Leaders (IML) members leading AI adoption firsthand across health and aged care, resources and energy, community services, and state government.

Ground-level evidence shows the answer is not technical software proficiency.

Navigating the AI shift demands adaptive skills – the judgement, contextual thinking, and human capability that determine whether an organisation thrives or fractures under pressure.

Adaptive Leadership has become a practical necessity. This whitepaper steps away from the headlines to offer HR and L&D leaders a working framework for leading a workforce through the AI transition.

What you will discover in this paper:

- The Unbossing Fallacy: Why aggressive headcount-reduction headlines fail to match the reality of the broader economy.

- The Agentic Workforce: How management changes when AI evolves from a passive tool into an active participant.

- The Jevons Paradox: Why AI efficiency creates more complex work and demands greater human oversight.

- The Capability Shift: How the value of management is moving from technical tasks toward human judgement, empathy, and adaptability.

- The Adaptive Framework: A structured approach to leadership and decision-making amid constant technological change.

- Six Executive Priorities: Immediate, actionable steps HR and L&D leaders must take to build workforce readiness for a shifting future.

Will artificial intelligence eliminate middle management?

Why is the technology sector aggressively culling management roles?

Block CEO Jack Dorsey announced plans to cut around 4,000 roles – roughly 40 per cent of the company’s workforce.

In a shareholder letter, he wrote: “A significantly smaller team, using the tools we’re building, can do more and do it better. And intelligence tool capabilities are compounding faster every week.”1

The market rewarded the direction. Block’s share price surged by more than 20 per cent on the day the cuts were announced.2

Around the same time, WiseTech Global announced around 2,000 job cuts over 18 months, with its CEO declaring the era of manually writing code as the core act of engineering “over.”3

Soon after, Atlassian cut roughly 1,600 roles and replaced its Chief Technology Officer with two executives described as next-generation AI talent4 – removing the person who would normally manage the technology strategy in the middle of the most significant tech shift in a generation.

What is the ‘unbossing’ trend and how is AI driving it?

The trend has a name: ‘unbossing’.5 The term has become shorthand for a sweeping argument about organisational structure.

AI, the logic runs, can now handle the coordination, information flow, and administrative connective tissue that justified management layers.

If the machine can synthesise, schedule, report, and escalate – what is the middle manager actually for?

Global aggregate data reinforces the narrative. In the US, job postings for middle managers fell 42 per cent from their 2022 peak to late 2025, according to workforce analytics firm Revelio Labs.6

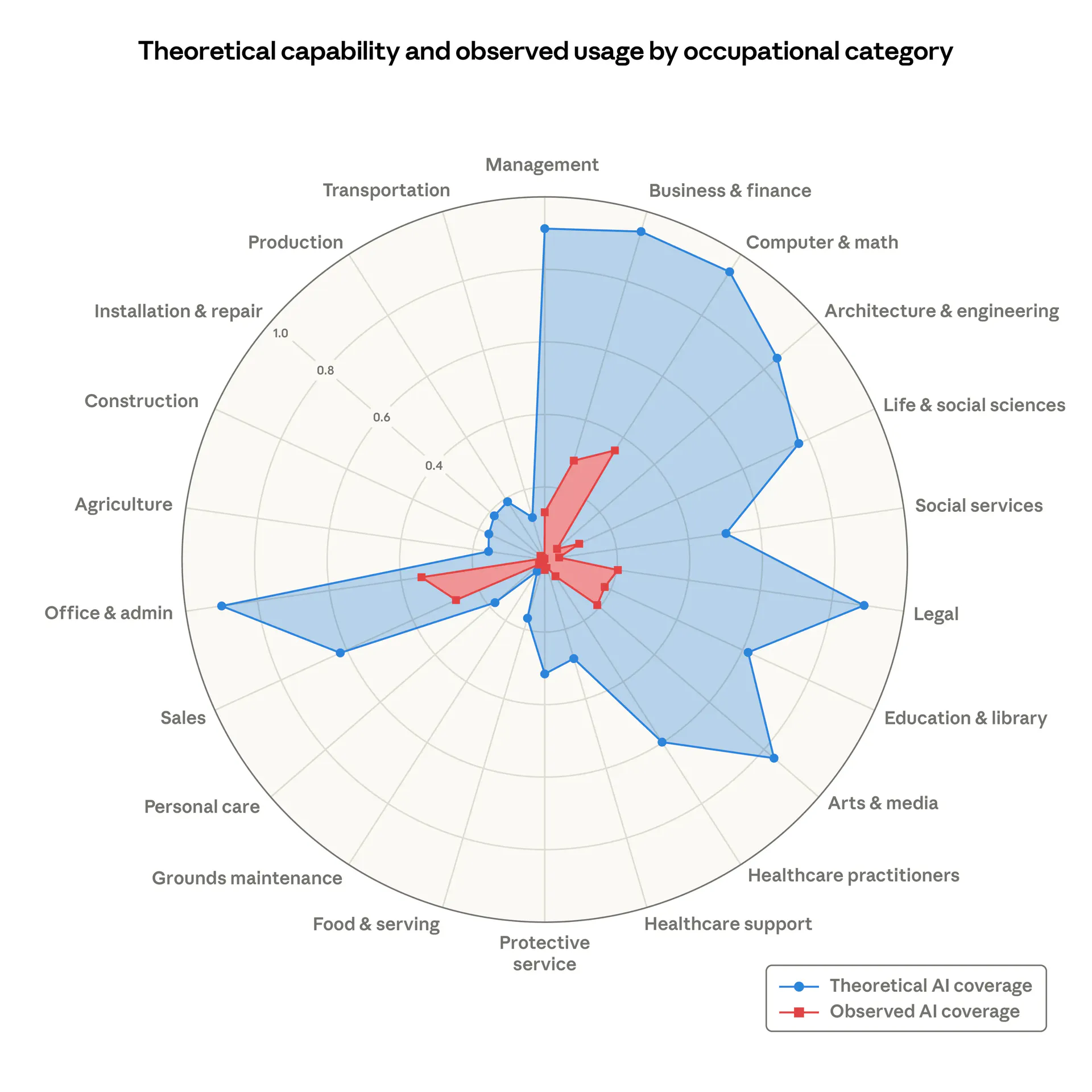

A study published by Anthropic in March 2026 examined AI’s potential impact across occupational categories.7

Professor Clinton Free of the University of Sydney applied the data to Australia’s workforce. Management occupations sit at 92 per cent theoretical exposure – behind only computing, finance, and office administration – translating to more than 1.5 million Australian management roles potentially affected.8

Does the technology sector's unbossing narrative match the reality of the broader economy?

What do current government projections show about the future of management roles?

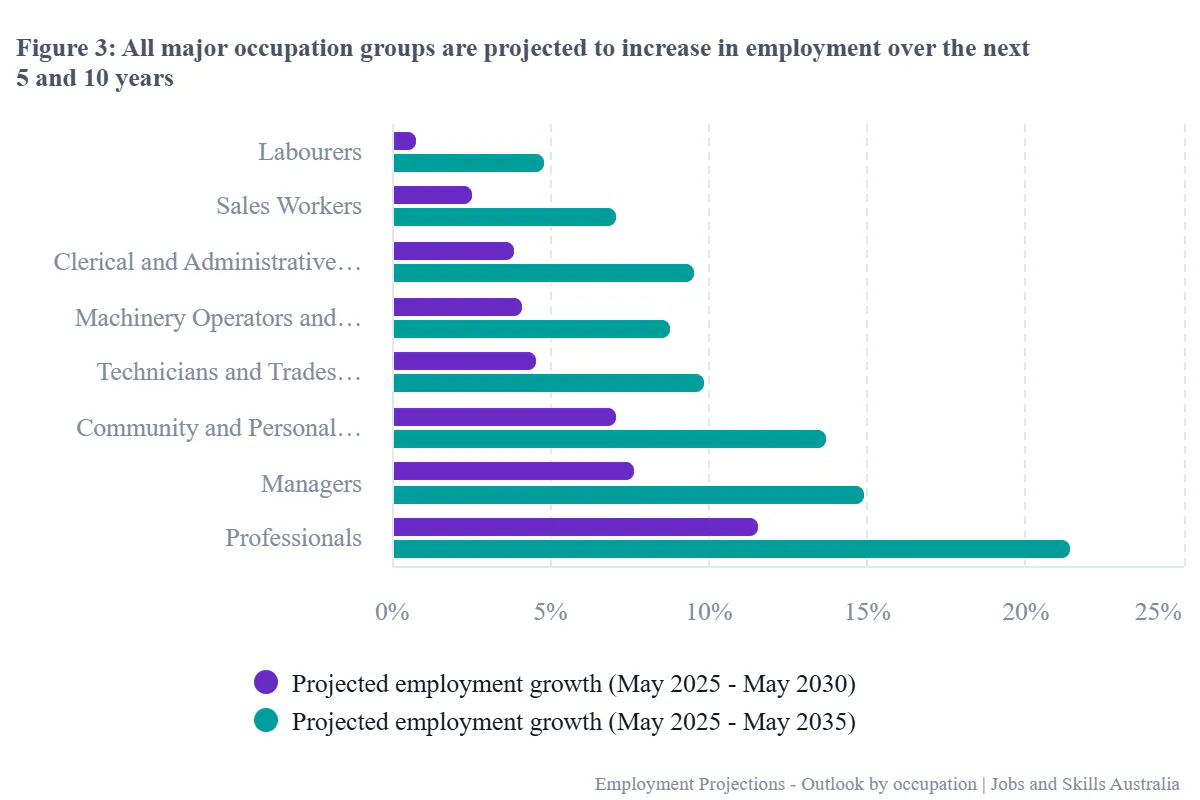

Jobs and Skills Australia projects management roles across the Australian economy will grow by 14.9 per cent to 2035 – an increase of approximately 279,600 positions.9

If government projections are anything to go by, the structural demand for management is not shrinking – it is growing.

These projections rest on deeper, longer-term modelling than the tech sector’s rapid restructures. They likely do not fully account for the AI advances and workforce cuts of recent months. But they do reflect where the broader economy is heading – and the direction still points firmly toward growth.

How much AI shifts that trajectory is now the question.

How will AI actually impact management in the broader Australian economy?

Most of the Australian economy does not resemble a global technology company – with products in AI’s direct firing line, a culture that rewards disruption, and investors demanding aggressive sharemarket growth.

IML’s own engagement with local industry more broadly points in a different direction: management roles are evolving, not disappearing.

AI is changing what managers do, not eliminating the need for them. The question is what that evolution looks like – and what it demands.

SANDRA HILLS OAM

CEO, Benetas – Aged care and allied health provider

Aged care’s AI equation: more demand, not fewer jobs

Sandra Hills says Australia’s aged care sector needs an additional 110,000 workers by 2031 and 400,000 by 2050.

For Hills, AI is not a threat to her workforce – it is essential to meeting demand that human recruitment alone cannot fill.

“We’re in a different ball game. We’re in an industry where we can’t get enough staff. We can’t get the right staff and there’s an aging population.

“For us it’s about needing artificial intelligence to deal with the rising number of people that require our care, to support our lifestyle team members in our homes.”

What happens to management when AI becomes an autonomous worker?

How does agentic AI differ from reactive generative tools?

Most people understand generative AI as a reactive tool. A user writes a prompt, the AI generates a response, the user decides what to do with the output.

Agentic AI operates on fundamentally different logic. An agentic system perceives its environment, applies judgement within defined parameters, and can execute tasks autonomously.

A global survey by BCG and MIT Sloan Management Review found that 76 per cent of executives already using agentic AI described it as more like a coworker than a tool.10

Among extensive adopters, 66 per cent expected fundamental changes to operating models.11

Stanford economist Erik Brynjolfsson takes the trajectory further, predicting that by 2050 most workers will command workforces larger than today’s biggest multinationals – not of people, “but fleets of AI agents – digital workers.”12

How will managers govern hybrid teams of human employees and AI agents?

The near-term picture is already taking shape: AI agents that schedule, triage, draft, and escalate, not waiting for instructions but acting within defined boundaries.

As The Economist observed in February 2026, the “organisational rewiring” that new technology demands has “barely begun”.13

The changes ahead are larger than the changes so far – and the management capabilities required to navigate them are fundamentally different from what most organisations are building today.

How does AI create more work for human managers?

Why does AI efficiency actually drive up human demand?

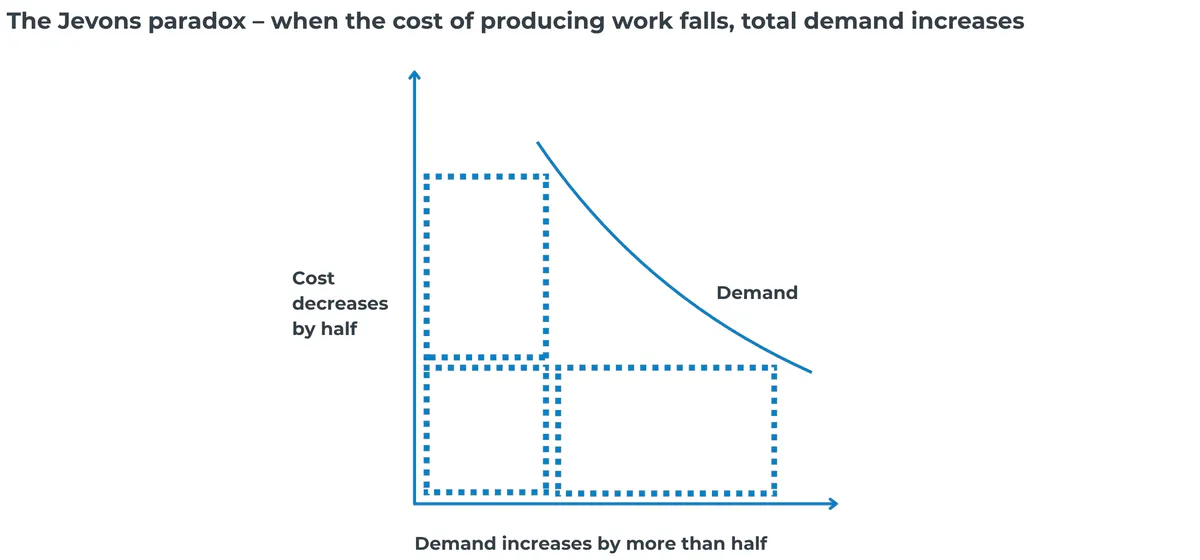

In 1865, William Stanley Jevons observed that James Watt’s more efficient steam engine did not reduce coal consumption – it increased it. When a resource becomes cheaper to use, people use more of it.14

The pattern has reliably repeated across successive waves of productivity technology. Brynjolfsson points to aviation: jet engines made pilots dramatically more productive, demand for air travel exploded, and the profession needed more pilots, not fewer.15

While Brynjolfsson argues AI will similarly drive up demand, he warns that simply installing the technology is not enough.

Productivity growth disappointed for years after personal computers became widespread. Gains materialised only after firms reorganised business models around the technology – not from adoption alone.

Why is the legal profession increasing headcount despite AI automation?

In March 2023, a Goldman Sachs global economics analysis estimated that 44 per cent of legal tasks were technically automatable, placing law near the top of exposed professions.16

A few years later, the on-the-ground reality in Australia looks somewhat different from the prediction.

Law firm Allens reported it increased non-partner fee-earners by 11 per cent to a record 1,078 while deploying more than half a dozen AI tools. Across the AFR Law Partnership Survey, 70 per cent of 53 firms increased lawyer numbers.17

At Holding Redlich, research tasks that once took four and a half hours were completed in 35 minutes.18

Law firms needed more lawyers, not fewer – to provide guidance, manage AI outputs, verify quality, and meet the demand that AI-driven productivity created.

How does AI create entirely new categories of risk?

In February 2026, the Financial Times reported a surge in AI-generated employee grievances in the United Kingdom.19

Complaints that once ran to the length of an email were arriving as 30-page submissions, citing legislation from the wrong jurisdiction and legal precedents that did not exist. Employment tribunal cases increased 33 per cent in three months.

Employees were generating complaints they would never have written themselves – because the effort-barrier had previously deterred them. AI removed the friction, and volume exploded.

The question confronting managers is not whether AI will reduce their workload but what it will replace it with. The evidence so far points to increased output, complexity, and risk – and more demand for the human judgement required to navigate all three.

SARAH HUDSON

Learning & Development Specialist, Arrow Energy – Resources and energy

When AI multiplies output, human oversight multiplies with it

Sarah Hudson uses AI extensively for training scenarios, shift coordination, and communications, but only for tasks she already knows well enough to catch errors.

“I don’t ask it to do things I don’t already know,” she says. “What I’m looking for is time saving. I can usually tell if it’s not right, and I’ll correct it.”

But faster output means more to verify. When one piece of work becomes thirty, the checking doesn’t shrink – it multiplies.

“You still have to check it all,” Hudson says. “It’s still a bot. It’s still technology. It’s not human.”

The problem is compounded by how most people prompt. Hudson sees users asking AI only for confirmation rather than asking it to challenge their thinking.

“People use it in a very narrow way. They’re not saying, ‘challenge me on this’ or ‘ask me questions that test my understanding.’ They just want it to tell them what they want to hear.”

Why is AI a leadership challenge, not a technology one?

The instinctive response is technological – deploy the tools, train people to use them, measure adoption.

But adoption without judgement is how organisations end up moving fast in the wrong direction.

Why does the automation-versus-augmentation distinction matter for workforce strategy?

Nobel laureate Daron Acemoglu identified the core misalignment. Organisations are directing AI too heavily toward automation – fewer people, same output – and not enough toward augmentation, where AI handles the mechanical while humans handle the judgement.20

The tech-sector restructures may be following this automation logic. However, IML’s engagement with organisations across the broader Australian economy suggests augmentation delivers stronger outcomes – while also placing greater demands on the people leading it.

What does AI-driven management disruption actually look like on the ground?

CHRIS KANE

L&D Manager, EACH – Community health

When anyone can use AI to build training instantly, who decides if they should?

AI can generate training content in minutes – which, Chris Kane says, can create a governance problem for L&D leaders responsible for ensuring workforce development is consistent, current, and aligned with organisational standards.

Even before AI tools entered the picture, Kane saw subject matter experts being encouraged to create their own e-learning materials. “I’ve been in organisations where everyone just gave it a go and it was chaos – is the information current, does it meet our policy, does it meet our brand?”

AI has made it frictionless.

“A trend I’m seeing from many vendors is promoting this very idea,” Kane says. “E-learning is the default solution, inefficiency is the problem, and putting authoring tools in the hands of more people is the answer – whilst ignoring the L&D expertise required before, during, and after.

“There’s a reason L&D experts and strategists exist. Everyone’s focused on the how and the efficiency. You need people overseeing the what and the why.”

ABHIJIT GUPTA

Chief Technology Officer, The Environment Protection Authority (EPA) Victoria – State government environmental regulator

The adoption curve that kept declining – and what it took to recover

EPA Victoria has officially rolled out Microsoft Copilot to around 40 per cent of the organisation. Initial uptake reached 80 to 90 per cent, but over time it began to decline.

“To uplift adoption levels, lunch-and-learn sessions including discussions on prompt engineering, with Capgemini (EPA’s IT Managed Services partner) and Microsoft, helped to improve awareness and increase adoption levels,” Abhijit Gupta says.

“Every time we organised education and awareness sessions, we observed the adoption levels go up. It would sustain for a while, then decline slightly however not to the same level it was before. Change adoption takes time!”

Each recovery required not just tool skills but helping staff understand how AI applied to their actual work. EPA is considering establishing a community of practice where staff share what works in the context of their own roles.

“People can explain what they’re doing, other people learn from their colleagues’ experiences and they can say, ‘Let me try it within the context that I operate in.’”

Deploying the technology, Gupta found, is the straightforward part. Sustaining meaningful use requires ongoing leadership commitment that many organisations may not adequately plan for.

Future scenarios: Will your management framework survive what comes next?

The case studies in this report involve AI already reshaping work in Australian organisations.

The scenarios that follow have not yet arrived in most organisations – but the structural conditions producing them are visible now.

Purpose and identity

AI tools now handle much of the analytical work the team used to do, and productivity is up. But the work that used to give people a sense of expertise and value – the thinking work – is now done by a machine; they feel more like reviewers than creators.

How does a manager maintain a sense of purpose and meaning when the work that defined people’s contribution has been automated, and how might L&D and HR support that shift in identity?

Volume and decision fatigue

AI has made the team dramatically more productive; they’re generating more analysis, more options, more outputs than ever before. The upside is clear – but the manager is now drowning in decisions, with every AI output needing review, judgement, sign-off.

The bottleneck has shifted from production to evaluation. How does a manager handle the increased cognitive load, and decide what to engage with deeply and what to trust?

New work emerging

AI has automated the routine parts of a role, taking low‑value tasks off people’s plates and freeing up capacity. Instead of eliminating the job, it creates space for more strategic thinking, relationship-building, and creative problem-solving – but the employee doesn’t know how to fill that space.

The old job had clear tasks; the new one is ambiguous. How does a manager help someone step into higher‑value work they’ve never been trained for?

Team structure and roles

AI capabilities are enabling a leaner structure, and traditional role boundaries no longer map cleanly: some jobs are disappearing, others are morphing into something unrecognisable, and new roles don’t yet have job descriptions.

How does a manager redesign team structure when the ground keeps shifting? What do titles and responsibilities even mean in this context, and what role do L&D and HR play in making those changes coherent and fair?

Authority and hierarchy

An AI system is generating strategic recommendations that are faster, more comprehensive, and more accurate than what the human team usually produces – and the team knows it. On one level this makes decision‑making easier, but it also raises questions: does the AI become the de facto decision-maker?

If a human disagrees with the AI’s recommendation, on what basis do they override it? How does a manager frame this relationship – is the AI a tool, a colleague, a superior – and what happens to human authority when the machine is more capable?

Hiring and investment

AI promises to automate significant parts of certain roles in the near term, potentially reducing the need for headcount. A manager still needs to fill a role now but knows there’s a reasonable chance it will be substantially automated within 18 months.

Is it ethical to hire someone into that uncertainty? How do you have that conversation? And more broadly – how do you invest in developing people when you can’t predict which skills will still be valuable in two years, from both a line‑manager and L&D/HR perspective?

Are HR and L&D leaders prepared for the AI workforce shift?

Many organisations are struggling to successfully integrate the technology into their workforce.

In February 2026, a National Bureau of Economic Research paper surveying nearly 6,000 senior business executives across the US, UK, Germany, and Australia found that more than 90 per cent reported no impact of AI on their firm’s employment over the past three years and 89 per cent reported no impact on labour productivity.21

The tools may work, but the capability to extract value remains the challenge.

How wide is the AI capability gap in Australian workplaces?

An Australian study by people2people Recruitment found that 70 per cent of employees felt their employer was not adequately preparing them for an AI-driven future.22

Only 17 per cent of employers had offered AI training or support of any kind.

CHRIS KANE

L&D Manager, EACH – Community health

How do you build workforce capability when the ground keeps shifting?

For L&D leaders, AI creates a planning problem most organisations have not yet solved: how do you build workforce capability when you cannot predict what the landscape looks like in 18 months?

Chris Kane is already watching the technical skills his teams were trained on being absorbed by AI.

“The tasks and skills as we knew them aren’t going to be as important anymore,” he says.

“I can’t be building professional development pathways around things like Excel reports when people are just going to get AI to do that.

“What I need to focus on is the human behaviours and the values we need to be bringing to our organisation.”

Why does the AI readiness gap start with HR and L&D leaders themselves?

HR and L&D leaders sit at the centre of this shift. They are the ones responsible for building workforce capability. And in an AI-driven environment, that means making the case upward to executive teams that adaptive capability is a long-term strategic investment, not a training line item.

It also means building downward – equipping managers with the frameworks to lead through disruption, and through them, preparing frontline teams for work that is changing faster than most development programs can keep pace with.

The risk is not just inaction. It is confident action in the wrong direction – teams adopting AI tools without the judgement to know when to trust the output and when to override it.

The question is what that judgement actually consists of, and which capabilities AI makes more valuable, not less.

Why does AI make human judgement more valuable, not less?

Why is the market rewarding experienced managers in AI-exposed roles?

A February 2026 study by the Federal Reserve Bank of Dallas examined 205 US occupations and found that AI replicates codified knowledge23 – the kind that can be written down, systematised, and taught from a textbook.

AI does not replicate tacit knowledge – the judgement, situational awareness, and contextual understanding that can only be developed through experience.

The study found that in occupations where experience commands the highest wage premium, AI exposure is associated with positive wage growth.

The market is already pricing in what the unbossing thesis ignores: experienced human judgement becomes more valuable in the presence of AI, not less.

The OECD reached the same conclusion from an institutional angle. In a 2024 analysis of AI-exposed occupations, the skills most in demand were management and business skills – and demand was increasing over time.24

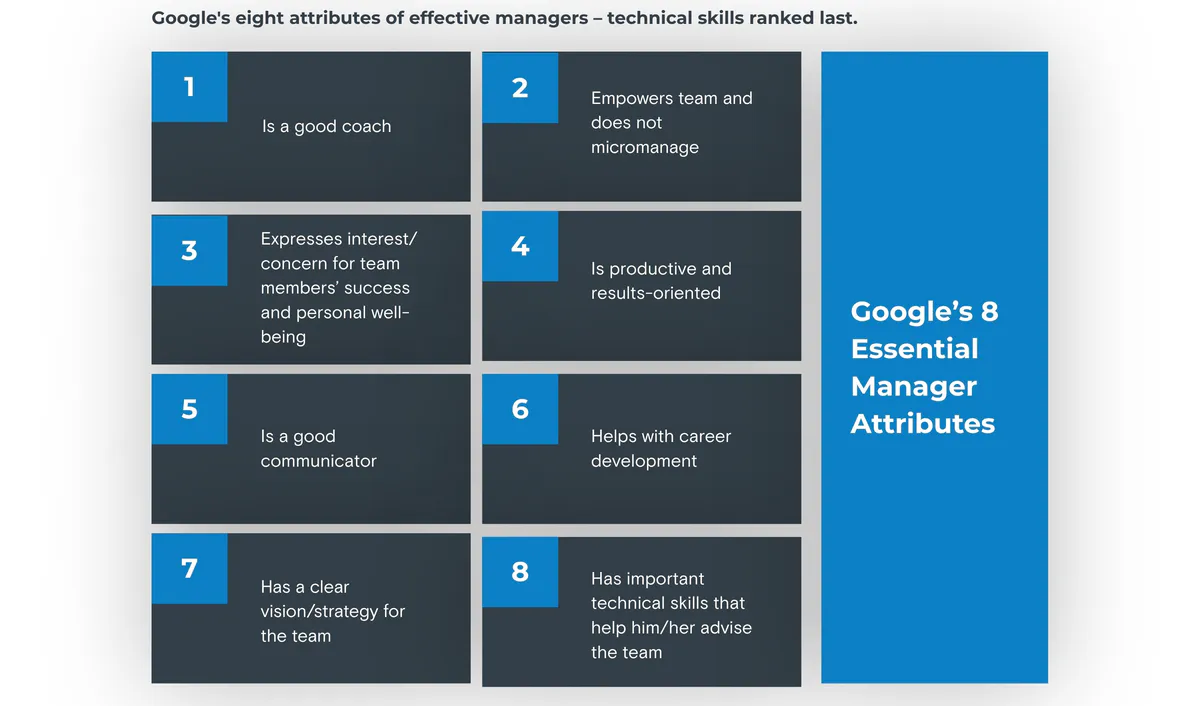

Why did Google's attempt to eliminate managers fail – and what did they learn?

Google offers an instructive proof point from the technology sector itself. In the early 2000s, convinced that engineers were best left to their own devices and that managers were at best a necessary layer of bureaucracy, the company attempted to eliminate management roles entirely.25

The experiment lasted only months. With no managers in place, employees went directly to founder Larry Page with expense questions, interpersonal conflicts, and day-to-day problems the founders had no capacity to resolve.

Google’s subsequent research – Project Oxygen – identified eight attributes of effective managers. All eight were fundamentally human capabilities. Technical skills ranked last.

Where does AI capability end and human judgement begin?

As AI absorbs more of the codifiable work, the capabilities that remain hardest to replicate become more central to what managers do.

The table below draws on the Dallas Fed’s codified-versus-tacit framework, the Google Project Oxygen findings, and the operational experience described by the executives and leaders interviewed for this paper.

| What AI handles (codified knowledge) | What managers must bring (tacit knowledge) |
|---|---|
| Data processing, pattern recognition, report generation | Interpreting data in context, questioning assumptions, knowing what the numbers miss |
| Scheduling, administrative coordination, information routing | Reading team dynamics, sensing when someone is struggling, intervening before problems escalate |
| Drafting, summarising, synthesising written material | Judging quality, identifying what’s missing, deciding what the organisation should actually say |
| Generating options, modelling scenarios, benchmarking | Making the call when the data is ambiguous or contradictory – and standing behind the decision |
| Monitoring compliance, flagging policy breaches | Navigating the grey areas where policy does not have an answer and judgement must fill the gap |
| Producing fast, confident, articulate outputs | Slowing down to ask whether the output is right and whether the organisation should act on it at all |

How does adaptive leadership equip managers to lead through AI-driven uncertainty?

By Scott Martin, General Manager, Learning, Development & Membership, IML

The challenges described in this paper – AI-generated output that overwhelms governance, adoption curves that decline without sustained investment, and growing demand for judgement that AI cannot provide – share a common feature.

None has a technical solution. Each requires people to change how they think about their work, their authority, and their relationship to a technology that is reshaping both.

This is the territory of adaptive leadership. The framework was pioneered by Ronald Heifetz, founder of the Center for Public Leadership at Harvard Kennedy School, who drew the distinction between technical problems, where the solution is known and can be implemented through existing expertise, and adaptive challenges, where the solution must be worked out by the people going through it.

AI is a technology shift. But the challenge it creates for managers is fundamentally about uncertainty and change, exactly the conditions adaptive leadership was built to address.

Why does a fixed playbook fail when the problem keeps changing?

Most management training teaches leaders to diagnose a problem, select a solution, and implement it. AI disruption does not operate on that logic.

The problem shifts as the technology evolves, and the human response shifts with it. What managers need is not a better playbook but the core discipline of adaptive leadership – navigating conditions where no playbook yet exists.

How does IML apply adaptive leadership to AI deployment?

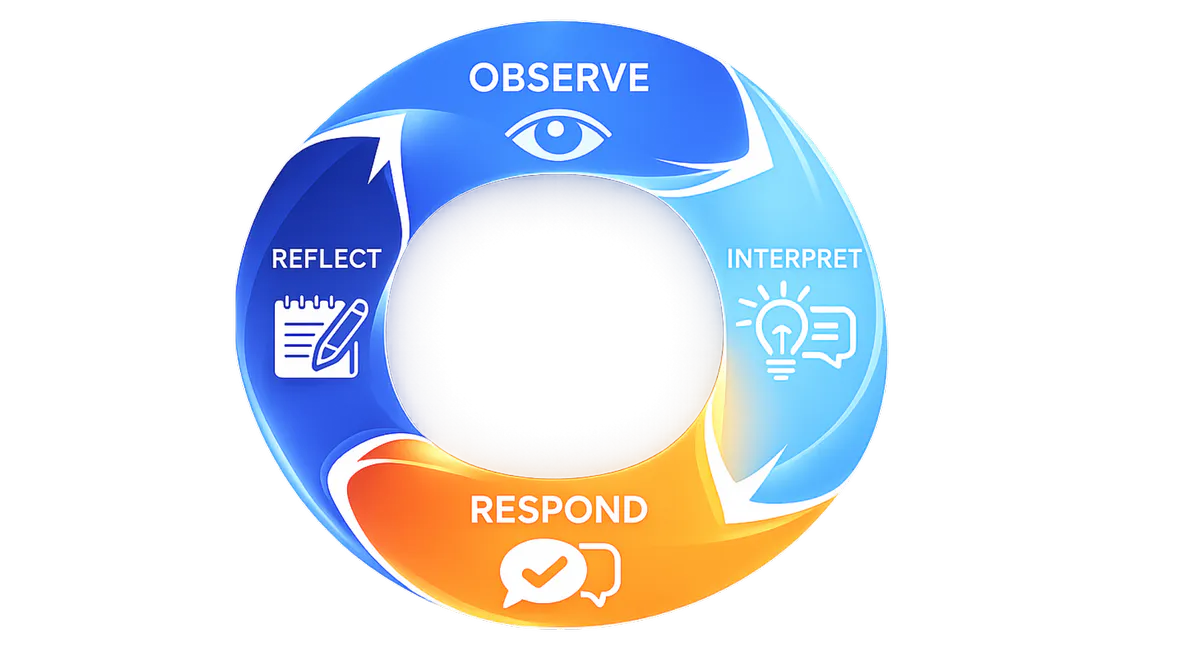

IML’s adaptive leadership framework operates through four steps – Observe, Interpret, Respond, Reflect (OIRR) – that form a continuous cycle rather than a sequence to be completed once.

OIRR is IML’s application of adaptive leadership in practice: a discipline for leading through challenges where the instinct to default to familiar responses must be resisted and replaced with deliberate, evidence-informed judgement.

Observe – Before acting on AI-generated outputs or responding to AI-driven disruption, stop. See the situation as it actually is – talk to people at every level, look beyond the dashboard, catch yourself before repeating the approach that worked last time in conditions that no longer apply.

Interpret – AI delivers synthesis that is averaged, flattened, and confident. Human interpretation sees what sits beneath the surface – why someone is resisting a change, what the political dynamics are, whether this is a technical problem with a known fix or an adaptive challenge that requires people to change.

Respond – AI provides options fast and recommends confidently. The human work is knowing which option fits this context, these people, this moment. A technically correct decision that no one was brought through is a decision that will be resisted. Responding in an adaptive leadership context means bringing people through the change, not announcing it.

Reflect – The AI landscape shifts fast enough that every decision is a learning opportunity. Without structured reflection, organisations keep applying yesterday’s solutions to tomorrow’s problems. Reflection makes iteration systematic – not an annual review, but a continuous discipline.

What happened when the same robot was deployed into two aged care facilities – and produced opposite results?

SANDRA HILLS OAM

CEO, Benetas – Aged care and allied health provider

Deploying companion robots: How context determines outcomes

Sandra Hills deployed Abi, a companion robot from Australian robotics firm Andromeda, across two Benetas aged care facilities.

The technology was identical. The outcomes were not – and the difference mapped directly to the OIRR cycle.

Observe. At the first facility, Benetas deployed Abi without adequately assessing ground-level readiness. “We didn’t do enough preparation, change management preparation,” Hills says. “And the staff expressed some concern about the impact on existing roles.”

At the second facility, the organisation assessed the environment first – a general manager was assigned to lead the process, the leadership team was briefed, and the context was understood before the technology arrived.

Interpret. The resistance at Facility 1 was not a rational objection to a robot. Staff whose professional value was tied to direct care saw a machine entering their domain without explanation.


The real issue was an identity challenge, not technology resistance. At Facility 2, that interpretation informed every subsequent decision.

Respond. At the second facility, the framing was explicit: Abi was there to augment human care, not replace it. Staff were involved in the process. Leadership was physically present – not dropping the technology off and leaving.

The response addressed the adaptive challenge, not just the technical deployment.

Reflect. Staff at the second facility were involved in the evaluation and could provide feedback. Hills describes the ongoing discipline: “It’s not set and forget. It’s important to understand who’s going to have carriage of it? Who’s going to monitor it? Who’s going to evaluate it?”

The deployment has continued for more than two years – an ongoing project requiring sustained leadership attention.

The same robot. The same technical specifications. Two entirely different results. The variable was not the technology. The variable was adaptive leadership.

Where should HR and L&D leaders start – and what are the six priorities that matter now?

By Sam Bell, CEO, Institute of Managers and Leaders (IML)

This paper has made the case that AI is not replacing managers – it is reshaping what management means. The challenge is adaptive, not technical. The OIRR framework provides the discipline. The question remaining is where to start.

The six priorities below reflect the concerns we are hearing most consistently across the organisations we work with, through this research and through our broader conversations with industry.

Some organisations are already confronting these challenges. Others will encounter them as AI adoption deepens. There is no settled playbook for what comes next, but these are the areas where HR and L&D leaders can begin building readiness now.

1. Build visibility and checkpoints into AI-driven decisions

AI accelerates decisions at every level. The risk is that decisions are made quickly, confidently, and without verification. Organisations need structured pauses before major decisions – moments where speed is deliberately traded for judgement.

They also need flow-through: strategic direction set in the boardroom must connect to what is actually happening on the ground, and ground-level experimentation should be visible to leadership. Without that alignment, there is a risk organisations end up with executives setting AI strategies no one follows and frontline teams adopting tools no one governs.

2. Design governance for iteration, not permanence

AI capabilities are evolving rapidly. A policy written in January may be obsolete by April. AI governance should be treated as a living document with compressed review cycles and event-based triggers – not a static framework that becomes an obstacle rather than a safeguard.

Australia’s own Voluntary AI Safety Standard was updated from 10 guardrails to 6 essential practices just over a year after its initial release.26

ABHIJIT GUPTA

Chief Technology Officer, The Environment Protection Authority (EPA) Victoria – State government environmental regulator

Governance that evolves along with technology

EPA Victoria rolled out Microsoft Copilot as its designated GenAI productivity tool, but Abhijit Gupta knew that the proliferation of AI tools presents ongoing challenges. “A variety of AI tools are now easily accessible, but we don’t want our staff accessing unsafe sites for anything related to their work,” he says.

“Take the example of DeepSeek. The Federal Government and the Victorian Government have classified DeepSeek as posing an unacceptable level of security risk. So we had to put technical controls in place so that people could only access sites which were deemed to be safe.”

The result is a practical tiered approach – tools the organisation actively encourages, tools it tolerates but doesn’t endorse, and tools it blocks outright – designed to be updated as new tools emerge and government directives change.

“People need to understand what is okay to use and what is unsafe.”

3. Keep roles and structures aligned with the real work

AI does not eliminate whole jobs cleanly. It hollows out parts of many roles while creating new work that does not fit existing job descriptions. An organisation that restructures based on the org chart rather than how work is actually being done risks building a structure that is already obsolete.

Before posting a vacancy, review whether the role still reflects reality. Before restructuring a team, map how AI has actually changed the work – who is doing what, what has been absorbed, and where new demands have appeared that no one owns.

4. Capture freed capacity and invest it in human capability

When AI takes 10 to 20 per cent of someone’s job, the freed-up time does not automatically flow somewhere valuable. Without deliberate intervention, the productivity gain can dissipate.

The priority is to identify freed capacity proactively and redeploy it into the capabilities AI does not replace: judgement, critical thinking, adaptive leadership. The purpose of AI-driven efficiency is to do better work, not simply less of it.

SARAH HUDSON

Learning & Development Specialist, Arrow Energy – Resources and energy

AI solved the logistics that made face-to-face training possible

Sarah Hudson needed to schedule follow-up coaching workshops for 66 leaders across complex alternating shift patterns, including 15 days on/13 off, 5 days on/2 off, and other rotating rosters. Mapping overlapping availability manually would have taken days, and that complexity might have meant defaulting to an online session instead.

Hudson fed the shift parameters into ChatGPT. “I probably spent 30 to 40 minutes inputting instructions,” she says. “I told it to ask me questions, fact-check me if I was wrong, double-check anything it wasn’t sure about.” She got back a six-tab spreadsheet mapping every participant to available sessions across six months.

AI did not eliminate the work. It broke through a bottleneck that would otherwise have made the training impractical. The result was four half-day face-to-face coaching workshops – human connection that would not have happened without AI handling the complexity that stood in the way.

5. Manage the human transition – identity, fear, and resistance

When AI absorbs the work that defined someone’s professional role, the threat is not only economic. The organisations interviewed for this paper described the same pattern: resistance that looked like reluctance to adopt a tool but was actually a response to losing the work that gave people professional purpose.

Treating AI deployment as a technical rollout misses the point. The human transition – how people experience the change, not just how the technology is implemented – is where deployment often succeeds or fails.

6. Embed adaptive thinking organisationally

Capability trapped in individuals does not spread. An organisation can invest in leadership development and build sophisticated frameworks – but if managers return to an environment that operates the same way, the learning fades.

The priority is to make adaptive leadership how the organisation thinks – building it into KPIs, meeting rhythms, decision-making processes, and performance conversations. Not something some people know, but how the organisation operates.

The case for acting now

The technology is shifting faster than planning cycles can accommodate. The organisations that invest in adaptive capability now – systematically, not piecemeal – will be the ones that capture AI’s value over the long term.

If this paper has raised questions about how your organisation is preparing its managers for the shift ahead, IML can help. We work with HR and L&D leaders to build adaptive leadership capability across their organisations.

IML Australia

IML New Zealand

© Institute of Managers and Leaders 2026. All rights reserved. Developed in partnership with Media Collateral.